31 Dec 2017

As part of working on my home-server setup, I wanted to move off a few online services to ones that I manage. This is a list of the services I used and what I’ve migrated to.

Why: I got frustrated with Google Play Music a few times. Synced songs would not show up across all clients immediately (I had to refresh, uninstall, or reinstall), and I hated the client limits it imposed. I decided to try microG on my phone at the same time, and it slowly came together.

Email

I’ve been using email on my own domain for quite some time (captnemo.in), but it was managed by an Outlook+Google combination that I didn’t like very much.

I switched to Migadu sometime last year and have been quite happy with the service. Their Privacy Policy and pros/cons section on the website are a pleasure to read.

Why: Email is the central point of your online identity. At the very least, use your own domain. That way, you’re protected if Google decides to suspend your account. Self-hosting email is a big responsibility that requires critical uptime, and I didn’t want to manage that, so I went with Migadu.

Why Migadu: You should read their HN thread.

Caveats: They don’t offer 2FA yet, but hopefully that will be fixed soon. Their spam filters aren’t the best either. Migadu even has a Drawbacks section on their website that you must read before signing up.

Alternatives: RiseUp, FastMail.

Google Play Music

I quite liked Google Play Music. While their subscription offering is horrible in India, I was a happy user of their “bring-your-own-music” plan. In fact, the most used Google service on my phone happened to be Google Play Music! I switched to a nice Subsonic fork called AirSonic, which gives me the ability to:

- Listen on as many devices as I want (Google has some limits)

- Listen using multiple clients at the same time

- Stream at whatever bandwidth I pick (I stream at 64kbps over 2G networks!)
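AirSonic speaks the Subsonic REST API, so a bitrate cap can also be requested per stream. Below is a minimal Python sketch, assuming the Subsonic API’s token authentication (t = md5(password + salt), available from API version 1.13.0) and the `maxBitRate` parameter of `stream.view`; the server URL, username, password, song ID, and client name are hypothetical placeholders.

```python
import hashlib
import secrets
from typing import Optional
from urllib.parse import urlencode


def stream_url(base_url: str, user: str, password: str, song_id: str,
               max_bitrate_kbps: int = 64, salt: Optional[str] = None) -> str:
    """Build a Subsonic-API stream URL capped at max_bitrate_kbps.

    Uses token auth: t = md5(password + salt), sent along with the salt s,
    so the plain-text password never appears in the URL.
    """
    if salt is None:
        salt = secrets.token_hex(6)  # fresh random salt for each request
    token = hashlib.md5((password + salt).encode("utf-8")).hexdigest()
    params = {
        "u": user,
        "t": token,
        "s": salt,
        "v": "1.13.0",                   # first API version with token auth
        "c": "sketch",                   # hypothetical client name
        "id": song_id,
        "maxBitRate": max_bitrate_kbps,  # in kbps; 0 means no limit
    }
    return f"{base_url.rstrip('/')}/rest/stream.view?{urlencode(params)}"
```

For example, `stream_url("https://music.example.com", "alice", "s3cret", "42")` gives a URL that any HTTP client can play at 64kbps.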

I’m currently using Clementine on the desktop (which, unfortunately, doesn’t cache music) and UltraSonic on the phone. AirSonic even supports bookmarks, which makes listening to audiobooks much simpler.

Why: I didn’t like Google Play Music’s limits, plus I wanted to try the “phone-without-google” experiment.

Why AirSonic: Subsonic is now closed source, and the Libresonic developers forked off to AirSonic, which is under active development. It is supported on all the devices I use, while Ampache has spotty Android support.

Google Keep

I switched to WorkFlowy, which has both an Android and a Linux app (both based on webviews). I’ve used it for years, and it is a great tool. I’m also using DAVDroid sync for the Open Tasks app on my phone. Both of these work well enough offline.

Why: I didn’t use Keep much, and WorkFlowy is a far better tool anyway.

Why WorkFlowy: It is the best note-taking/list tool I’ve used.

Phone

I switched over to the microG fork of LineageOS, which offers a reverse-engineered implementation of the Google Play Services modules. It includes:

microG Core

It talks to Google for sync and account purposes.

Why: It saves me a lot of battery, and unlike Google Play Services, I can uninstall it.

Cons: Not all Google services are supported well. Push notifications have some issues on my phone. See the Wiki for Implementation Status.

UnifiedLP

It replaces the Google Location Provider. I use Mozilla Location Services, along with Mozilla Stumbler to help improve their data.

Why: Google doesn’t need to know where I am.

Caveats: GALP (Google Assisted Location Provider) gets GPS locks much faster in comparison. However, I’ve found the Mozilla Location Services coverage in Bangalore to be pretty good.

Maps

Still looking for decent alternatives.

Uber

microG comes with a Google Maps shim that talks to OpenStreetMap. The maps feature in Uber worked fine with that shim, but booking cabs was not always possible. I switched over to m.uber.com, which worked quite well for some time.

Uber doesn’t really spend resources on their mobile site, though, and it would occasionally stop working, especially around payments. I’ve since switched over to the Ola mobile website, which works pretty well. I keep the OlaMoney app alongside for recharging the OlaMoney wallet.

The Uber->Ola switch was also partially motivated by how badly run Uber is.

For calendars and contacts, most implementations support CalDAV/CardDAV. I’m using DAVDroid to sync to a self-hosted Radicale server.

Why: My contacts had always been synced to Google, so it was my single source of truth for contacts. But since I’m on a different email provider now, it makes sense to move those contacts off as well. Radicale also lets me manage multiple address books very easily.

Why Radicale: I looked around at alternatives, and 2 looked promising: Sabre.io and Radicale. Sabre is no longer under development, so I picked Radicale, which also happened to have a regularly updated Docker image.
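Since DAVDroid and Radicale just speak CardDAV, listing the address books on the server comes down to a single WebDAV PROPFIND. Here is a minimal Python sketch, assuming a Radicale-style layout where a user’s collections sit under /<user>/; the request body and the namespaces follow the WebDAV/CardDAV specs, and the parser is exercised on a canned response rather than a live server.

```python
import xml.etree.ElementTree as ET

DAV = "DAV:"
CARDDAV = "urn:ietf:params:xml:ns:carddav"

# Body for "PROPFIND /<user>/" sent with the header "Depth: 1".
PROPFIND_BODY = """<?xml version="1.0" encoding="utf-8"?>
<d:propfind xmlns:d="DAV:">
  <d:prop>
    <d:resourcetype/>
    <d:displayname/>
  </d:prop>
</d:propfind>"""


def addressbooks(multistatus_xml: str) -> dict[str, str]:
    """Return {href: displayname} for every address book in a 207 Multi-Status reply."""
    root = ET.fromstring(multistatus_xml)
    books = {}
    for response in root.iter(f"{{{DAV}}}response"):
        href = response.findtext(f"{{{DAV}}}href", default="")
        rtype = response.find(f".//{{{DAV}}}resourcetype")
        # Address books carry <card:addressbook/> inside <d:resourcetype>.
        if rtype is not None and rtype.find(f"{{{CARDDAV}}}addressbook") is not None:
            name = response.findtext(f".//{{{DAV}}}displayname", default="") or href
            books[href] = name
    return books
```

Plain collections (like the user’s home collection) are skipped, so each extra address book you create in Radicale shows up as one more entry.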

Google Play Store

Switch to FDroid: it has some apps that Google doesn’t like, and more. Moreover, you can use YALP Store to download any application from the Play Store. As an alternative, you can even run an FDroid repository for the apps you use from the Play Store. See this excellent guide on the various options.

Why: Play Store is tightly linked to Google Play Services, and doesn’t play nice with microG.

Why FDroid: FDroid has publicly verifiable builds, and tons of open-source applications.

Why Yalp: It was easy enough to set up.

If you’re looking to migrate to microG, I’d recommend going through the entire NO Gapps Setup Guide by shadow53 before proceeding.

LastPass

I’ve switched to pass, synced to Keybase.

Why: LastPass has had multiple breaches and a plethora of security issues (including 2 RCE vulnerabilities). Until recently, their fingerprint authentication on Android could be bypassed. I just can’t trust them any more.

Why pass: It is built on strong crypto primitives, is open-source, and has good integration with both i3 and Firefox. There is also a LastPass migration script, which I used.

Caveats: Website names are used as filenames in pass, so even though the passwords are encrypted, you don’t want to push the store to a public Git server (that would expose the list of services you use). I’m using my own Git server, along with Keybase git (which keeps it end-to-end encrypted, even the branch names). You also need to safeguard your GPG keys instead of a single master password.
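To see concretely what a pass store leaks, note that each entry is a GPG-encrypted file whose path is the entry name, by default under ~/.password-store (or wherever PASSWORD_STORE_DIR points). The minimal Python sketch below lists those entry names exactly as anyone with read access to the Git repo would see them, without decrypting anything.

```python
import os
from pathlib import Path
from typing import Optional


def visible_entries(store: Optional[Path] = None) -> list[str]:
    """List pass entry names, which are just the *.gpg paths in the store.

    Nothing is decrypted: these names are exactly what anyone who can read
    the store's Git repository learns about the services you use.
    """
    if store is None:
        default = Path.home() / ".password-store"
        store = Path(os.environ.get("PASSWORD_STORE_DIR", str(default)))
    # "email/migadu.com.gpg" -> "email/migadu.com"
    names = [p.relative_to(store).with_suffix("").as_posix()
             for p in store.rglob("*.gpg")]
    return sorted(names)
```

Running this against your own store is a quick way to decide whether the repo is safe to push anywhere public (it usually isn’t).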

GitHub

As a bonus, I set up a Gitea server hosted at git.captnemo.in. Gitea is a fork of gogs, and is a single-binary Go application that you can run easily.

I’m just running it for fun, since I’m pretty happy with my GitHub setup. However, I might move some of my sensitive repos (such as this one) to my own host.

Why Gitea: The other alternatives were gogs and GitLab. There have been concerns about gogs’ development model, and GitLab was just too heavyweight for my use case. (I’m using the home server for gaming as well, so resource usage matters.)

If you’re interested in my self-hosting setup, I’m using Terraform + Docker, the code is hosted on the same server, and I’ve been writing about my experience and learnings:

- Part 1, Hardware

- Part 2, Terraform/Docker

- Part 3, Learnings

- Part 4, Migrating from Google (and more)

- Part 5, Home Server Networking

- Part 6, btrfs RAID device replacement

If you have any comments, reach out to me.

18 Dec 2017

Learnings

I forgot to do this on the last blog post, so here is the list:

- Arch Linux has official packages for Intel microcode updates.

- WireGuard is almost there. I’m running OpenVPN for now, waiting for the stable release.

- While traefik is great, I’m concerned about the security model it uses for connecting to Docker: it talks to the Docker unix socket through a mounted volume, which effectively gives it root access on the host. Scary stuff.

- Docker labels are a great signalling mechanism. Update: after seeing multiple bugs in how traefik uses Docker labels, I think they have limited use cases, but work great in those. Don’t try to over-architect them for all your metadata.

- Terraform’s Docker provider still needs a lot of work. Many updates destroy and recreate containers, even though they should be applicable without a destroy.

- I can’t easily proxy Gitea’s SSH traffic, since traefik doesn’t support TCP proxying yet.

- The docker_volume resource in Terraform is useless, since it doesn’t give you any control over the volume location on the host. (This might be a Docker limitation.)

- The upload block inside a docker_container resource is a great idea: it lets you push configuration straight into a container. For example, this is how I push the traefik configuration into its container:

  ```hcl
  upload {
    # Copy the local traefik.toml into the container at this path
    content = "${file("${path.module}/conf/traefik.toml")}"
    file    = "/etc/traefik/traefik.toml"
  }
  ```
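As an illustration of labels as a signalling mechanism kept to a narrow job, the sketch below turns a container’s labels into a routing decision, loosely modelled on the traefik 1.x labels `traefik.enable`, `traefik.frontend.rule`, and `traefik.port`. It is a toy re-implementation working on a plain label dict (such as `Config.Labels` from `docker inspect`), not traefik’s actual logic; the `expose_by_default` flag and the port-80 fallback are simplifying assumptions.

```python
from typing import NamedTuple, Optional


class Route(NamedTuple):
    rule: str
    port: int


def route_for(labels: dict[str, str], expose_by_default: bool = False) -> Optional[Route]:
    """Turn a container's labels into a route, or None to leave it unrouted.

    Labels carry only an on/off switch, a host rule, and a port; richer
    metadata probably belongs in real configuration instead.
    """
    enabled = labels.get("traefik.enable", str(expose_by_default)).lower() == "true"
    rule = labels.get("traefik.frontend.rule")
    if not enabled or rule is None:
        return None
    # Falling back to port 80 is an assumption of this sketch.
    return Route(rule=rule, port=int(labels.get("traefik.port", "80")))
```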

Advice

This section is for anyone venturing into a Docker-heavy Terraform setup:

- Use traefik. It will save you a lot of pain with proxying requests.

- Repeat the ports section for every IP you want to listen on. CIDRs don’t work.

- If you want the container to start on boot, you want the following in its docker_container resource:

  ```hcl
  restart               = "unless-stopped"
  destroy_grace_seconds = 10
  must_run              = true
  ```

- If you have even a single docker_registry_image resource in your state, you can’t run Terraform without internet access.

- Breaking your Docker module into images.tf, volumes.tf, and data.tf (for registry_images) works quite well.

- Memory limits on Docker containers can be too constraining. Keep an eye on the logs to see if anything is getting killed.

- Set up Docker TLS auth first. I tried proxying Docker over Apache with basic auth, but it didn’t work out well.
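For the memory-limit advice, one way to keep an eye on kills is to scan the kernel log for OOM-killer messages. A minimal Python sketch, assuming kernel lines of the form `... Kill process 1234 (mongod) ...` or `... Killed process 1234 (mongod) ...` (the wording varies across kernel versions, so treat the regex as an assumption to check against your own logs):

```python
import re
from typing import Iterable

# Matches both the older "Kill process" and newer "Killed process" wording.
OOM_RE = re.compile(r"Kill(?:ed)? process (\d+) \(([^)]+)\)")


def oom_kills(lines: Iterable[str]) -> list[tuple[int, str]]:
    """Return (pid, process name) for every OOM-killer message in lines."""
    kills = []
    for line in lines:
        match = OOM_RE.search(line)
        if match:
            kills.append((int(match.group(1)), match.group(2)))
    return kills
```

You would feed it the output of `dmesg` or `journalctl -k`; for a per-container view, `docker inspect` also reports a `State.OOMKilled` flag.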

MongoDB with forceful server restarts

Since my server gets a forceful restart every few days due to power cuts (I’m still working on a backup power supply), I faced some issues with MongoDB being unable to recover cleanly. The lockfile would indicate an ungraceful shutdown, and it would require manual repairs, which sometimes failed.

As a weird, hacky fix, since most of the errors came from MongoDB’s WiredTiger engine itself, I hypothesized that switching to a more robust engine might save me from these manual repairs. I switched to MongoRocks, and while that has stopped the repair issue, Wiki.js still doesn’t like it, and I’m facing this issue: https://github.com/Requarks/wiki/issues/313

However, I don’t have to repair the DB manually, which is a win.
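The lockfile check mentioned above is easy to script before starting the container. A minimal Python sketch, assuming MongoDB’s documented convention (stated for the MMAPv1 engine; verify it for your storage engine) that a non-empty `mongod.lock` in the data directory means the last shutdown was not clean. `/data/db` is MongoDB’s default dbPath; adjust it for your volume.

```python
from pathlib import Path


def unclean_shutdown(dbpath: str = "/data/db") -> bool:
    """True if mongod.lock exists and is non-empty.

    mongod keeps its PID in the lock file while running and, per MongoDB's
    docs for MMAPv1, empties it on a clean shutdown, so leftover contents
    suggest a crash or power cut.
    """
    lock = Path(dbpath) / "mongod.lock"
    return lock.exists() and lock.stat().st_size > 0
```

What to do when it returns True (for example, running `mongod --repair` first) is left to your own setup.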

SSHD on a specific interface

My proxy server has the following

It also has an associated Anchor IP (10.47.0.5) via DigitalOcean for static IP use cases; this address doesn’t show up in ifconfig.

I wanted to run the following setup:

```
eth0:22      -> sshd
Anchor-IP:22 -> simpleproxy -> gitea:ssh
```

where gitea is the Git server hosting git.captnemo.in. This way:

- I could SSH to the proxy server over port 22

- And SSH directly to the Gitea server over port 22 using a different IP address.

Unfortunately, sshd can’t be told to listen on a specific interface (its ListenAddress option takes addresses, not interface names), and since the eth0 IP is not static, I can’t rely on it.

As a result, I’ve resorted to using 2 separate ports:

```
22  -> simpleproxy -> gitea:ssh
222 -> sshd
```

There are some hacky ways around this, such as a separate service that starts sshd only after network connectivity is up, but I figured this would be much more stable.
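simpleproxy just shovels bytes between two TCP connections. For illustration, here is a minimal asyncio stand-in for the `22 -> simpleproxy -> gitea:ssh` hop; it is not simpleproxy itself, and the `gitea` hostname (assumed to resolve to the Gitea container) and its SSH port are assumptions taken from the mapping above.

```python
import asyncio


async def pipe(reader: asyncio.StreamReader, writer: asyncio.StreamWriter) -> None:
    """Copy bytes from reader to writer until EOF, then close writer."""
    try:
        while data := await reader.read(65536):
            writer.write(data)
            await writer.drain()
    except ConnectionError:
        pass  # peer went away mid-transfer; just tear down
    finally:
        writer.close()


async def start_proxy(listen_host: str, listen_port: int,
                      target_host: str, target_port: int) -> asyncio.AbstractServer:
    """Forward each connection on listen_host:listen_port to target_host:target_port."""

    async def handle(client_reader: asyncio.StreamReader,
                     client_writer: asyncio.StreamWriter) -> None:
        try:
            up_reader, up_writer = await asyncio.open_connection(target_host, target_port)
        except OSError:
            client_writer.close()  # upstream unreachable: drop the client
            return
        # Shovel bytes in both directions until either side closes.
        await asyncio.gather(pipe(client_reader, up_writer),
                             pipe(up_reader, client_writer))

    return await asyncio.start_server(handle, listen_host, listen_port)


async def main() -> None:
    # Hypothetical wiring: listen on 22 and forward to the Gitea container's SSH port.
    server = await start_proxy("0.0.0.0", 22, "gitea", 22)
    async with server:
        await server.serve_forever()
```

Running it is a matter of `asyncio.run(main())` as root (port 22 is privileged); sshd itself then stays on 222.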

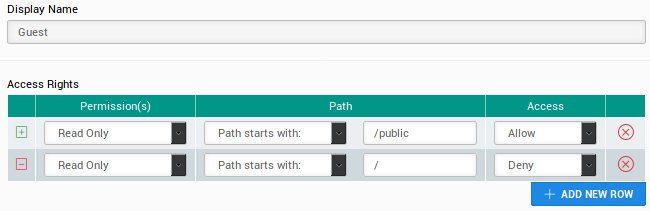

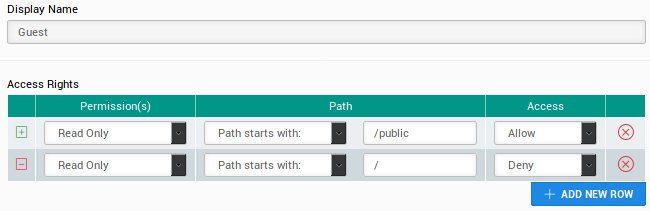

Wiki.js public pages

I’m running Wiki.js at https://wiki.bb8.fun. A specific requirement I had was public pages, so that I could give people links to specific resources that could be browsed without a login.

However, I wanted the default to be authenticated, and only certain pages to be public. The config for this was surprisingly simple:

YAML config

You need to ensure that defaultReadAccess is false:

```yaml
auth:
  defaultReadAccess: false
  local:
    enabled: true
```

Guest Access

The following configuration is set for the guest user:

Any pages created under the /public directory are now browsable by anyone.

Here is a sample page: https://wiki.bb8.fun/public/nebula
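The resulting behaviour amounts to default-deny with a single public prefix. A toy Python model of that rule, handy for reasoning about which URLs a guest can open; it is not Wiki.js’s actual access-control code, and the path normalisation step is an assumption of the sketch.

```python
from posixpath import normpath

PUBLIC_PREFIX = "/public"


def guest_can_read(path: str, default_read_access: bool = False) -> bool:
    """Model of the setup above: guests can read only pages under /public."""
    if default_read_access:
        return True  # in this model, defaultReadAccess: true opens every page
    # Normalise first so paths like /public/../private don't slip through.
    clean = normpath("/" + path.lstrip("/"))
    return clean == PUBLIC_PREFIX or clean.startswith(PUBLIC_PREFIX + "/")
```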

Docker CA Cert Authentication

I wrote a script that goes with the Docker TLS guide to help you set up TLS authentication.

OpenVPN default gateway client side configuration

I’m running an OpenVPN configuration on my proxy server. However, I don’t always want to use my VPN as the default route, only when I’m on an untrusted network. I still want to be able to connect to the VPN and use it to reach other clients.

The solution is two-fold:

Server Side

Make sure you do not have the following in your OpenVPN server.conf:

```
push "redirect-gateway def1 bypass-dhcp"
```

Client Side

I created 2 copies of the VPN configuration file. Both of them have identical config, except for this one line:

```
redirect-gateway def1
```

If I connect using the configuration that has this line, all my traffic is forwarded over the VPN. If you’re using Arch Linux, this is as simple as creating 2 config files:

```
/etc/openvpn/client/one.conf
/etc/openvpn/client/two.conf
```

And running systemctl start openvpn-client@one. I’ve enabled my non-default-route VPN service, so it connects automatically on boot.
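Keeping two client files identical except for one line is easy to get wrong by hand, so one option is to generate the full-tunnel variant from the other. A minimal Python sketch, assuming the base file is the one without `redirect-gateway`; which of your files plays which role (and the paths you pass in) is up to you.

```python
from pathlib import Path

REDIRECT = "redirect-gateway def1"


def with_default_route(base_config: str) -> str:
    """Return base_config plus the one line that routes all traffic over the VPN."""
    # Drop any existing redirect-gateway line so the function is idempotent.
    lines = [line for line in base_config.splitlines()
             if not line.strip().startswith("redirect-gateway")]
    lines.append(REDIRECT)
    return "\n".join(lines) + "\n"


def write_variant(base_path: Path, variant_path: Path) -> None:
    """Write variant_path as a copy of base_path plus the redirect-gateway line."""
    variant_path.write_text(with_default_route(base_path.read_text()))
```

Regenerating the variant after every change to the base file keeps the two profiles from drifting apart.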
